平衡模型的復雜性和交叉驗證的分數?

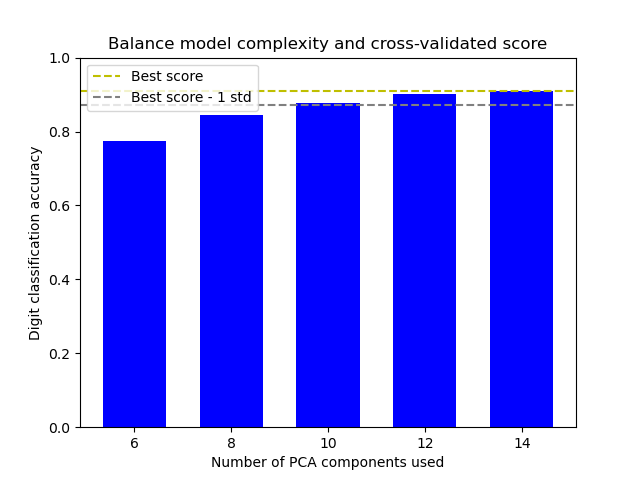

本案例通過在最佳準確性得分的1個標準偏差內找到適當的準確性,同時使PCA組件的數量最小化來平衡模型的復雜性和交叉驗證的得分[1]。

該圖顯示了交叉驗證得分和PCA組件數量之間的權衡。 平衡情況是n_components = 10且精度= 0.88,該范圍落在最佳精度得分的1個標準偏差之內。

[1] Hastie, T., Tibshirani, R.,, Friedman, J. (2001). Model Assessment and Selection. The Elements of Statistical Learning (pp. 219-260). New York, NY, USA: Springer New York Inc..

輸出:

The best_index_ is 2

The n_components selected is 10

The corresponding accuracy score is 0.88

輸入:

# 作者: Wenhao Zhang <wenhaoz@ucla.edu>

print(__doc__)

import numpy as np

import matplotlib.pyplot as plt

from sklearn.datasets import load_digits

from sklearn.decomposition import PCA

from sklearn.model_selection import GridSearchCV

from sklearn.pipeline import Pipeline

from sklearn.svm import LinearSVC

def lower_bound(cv_results):

"""

計算最佳mean_test_scores的1個標準偏差內的下限。

參數

----------

cv_results : 掩碼多維數組組成的字典

同時查看GridSearchCV的屬性cv_results_

返回

-------

浮點數

最佳mean_test_scores的1個標準偏差內的下限。

"""

best_score_idx = np.argmax(cv_results['mean_test_score'])

return (cv_results['mean_test_score'][best_score_idx]

- cv_results['std_test_score'][best_score_idx])

def best_low_complexity(cv_results):

"""

平衡交叉驗證的得分與模型的復雜性

參數

----------

cv_results : 掩碼多維數組組成的字典

同時查看GridSearchCV的屬性cv_results_

返回值

------

整數值

PCA組件最少的模型的索引

其測試分數在bestmean_test_score的1個標準偏差之內

"""

threshold = lower_bound(cv_results)

candidate_idx = np.flatnonzero(cv_results['mean_test_score'] >= threshold)

best_idx = candidate_idx[cv_results['param_reduce_dim__n_components']

[candidate_idx].argmin()]

return best_idx

pipe = Pipeline([

('reduce_dim', PCA(random_state=42)),

('classify', LinearSVC(random_state=42, C=0.01)),

])

param_grid = {

'reduce_dim__n_components': [6, 8, 10, 12, 14]

}

grid = GridSearchCV(pipe, cv=10, n_jobs=1, param_grid=param_grid,

scoring='accuracy', refit=best_low_complexity)

X, y = load_digits(return_X_y=True)

grid.fit(X, y)

n_components = grid.cv_results_['param_reduce_dim__n_components']

test_scores = grid.cv_results_['mean_test_score']

plt.figure()

plt.bar(n_components, test_scores, width=1.3, color='b')

lower = lower_bound(grid.cv_results_)

plt.axhline(np.max(test_scores), linestyle='--', color='y',

label='Best score')

plt.axhline(lower, linestyle='--', color='.5', label='Best score - 1 std')

plt.title("Balance model complexity and cross-validated score")

plt.xlabel('Number of PCA components used')

plt.ylabel('Digit classification accuracy')

plt.xticks(n_components.tolist())

plt.ylim((0, 1.0))

plt.legend(loc='upper left')

best_index_ = grid.best_index_

print("The best_index_ is %d" % best_index_)

print("The n_components selected is %d" % n_components[best_index_])

print("The corresponding accuracy score is %.2f"

% grid.cv_results_['mean_test_score'][best_index_])

plt.show()

腳本的總運行時間:(0分鐘5.453秒)